Enterprise brands do not have one website to monitor in AI search - they have dozens or hundreds. A global consumer goods company might manage 80 product brand websites across 40 markets. A financial services group might track 30 subsidiary brands. A digital agency holding group might manage AI visibility for 150 client domains from a single operations team.

Manual AI visibility monitoring - which works reasonably well for a single brand - does not scale to enterprise portfolios. The tools, query architecture, reporting hierarchy, and operational workflows that work for one domain have to be fundamentally redesigned for portfolios 10, 50, or 100 times larger.

This guide covers how enterprise teams build AI visibility management systems that actually work at portfolio scale.

The Enterprise AI Visibility Challenge

Large enterprises face four specific challenges that individual brand managers do not:

Query architecture at scale

A single brand might need 20 target queries for meaningful AI visibility monitoring. A portfolio of 100 brands needs a structured approach to query development - one that keeps queries consistent across brands for comparison while allowing brand-specific customisation for different markets and product categories. Without a systematic query architecture, one brand's results cannot be meaningfully compared with another's in portfolio reporting.

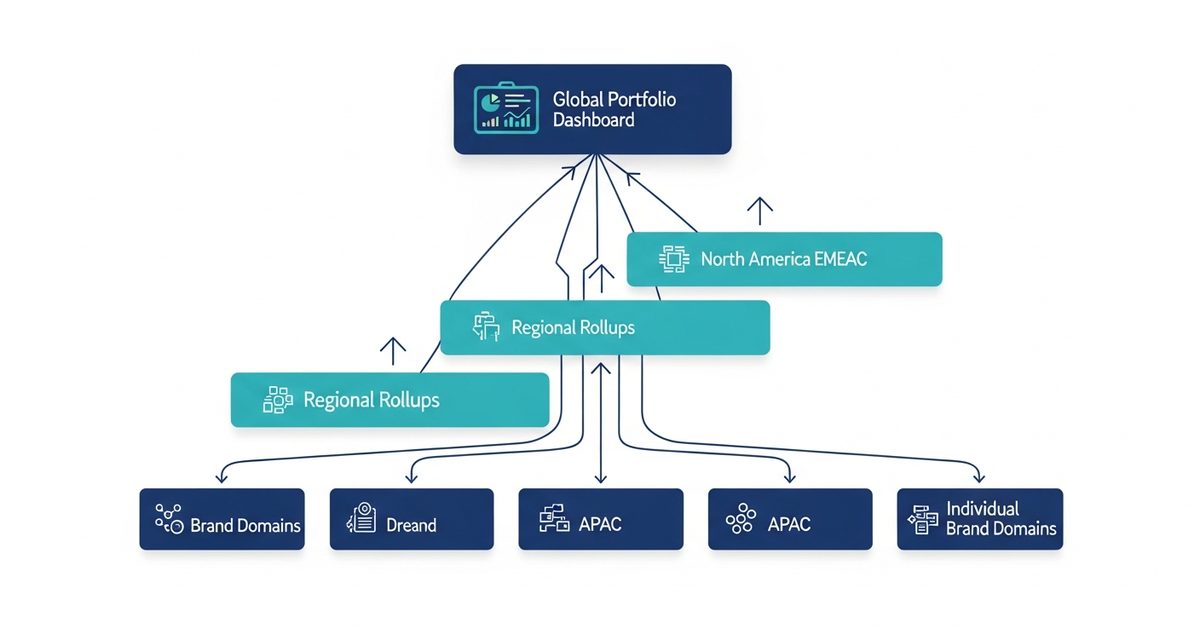

Hierarchical reporting requirements

Brand managers need brand-level detail. Regional directors need regional rollups. The CMO and executive team need portfolio-level benchmarks and trend lines. Building a single monitoring system that serves all three reporting levels requires deliberate hierarchy design from the start - not retrofitting individual brand reports into executive summaries after the fact.

Resource allocation decisions

Enterprise AI visibility programs need to direct content and technical investment where the citation gap is largest relative to commercial importance. Without portfolio-level visibility data, these decisions are made by recency and politics rather than data - the most recently audited brand gets the most attention, not the brand with the largest citation gap in the highest-value market.

Competitive benchmarking across categories

A CPG enterprise with 20 product brands across 5 categories needs competitive AI citation benchmarking per category - food brands do not compete against personal care brands for the same queries. Building competitive benchmarks that are meaningful at brand level and comparable at portfolio level requires a structured competitive set definition per brand family.
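
One way to keep category benchmarks both meaningful and comparable is to define each competitive set once per brand family and measure every brand's gap only against its own category's rivals. The sketch below assumes competitor citation rates (0-1) are already available from your monitoring data; the `COMPETITIVE_SETS` mapping, the placeholder domains, and the function names are hypothetical.

```python
# Hypothetical competitive sets, defined once per brand family (category).
# Domain names are placeholders, not real competitors.
COMPETITIVE_SETS: dict[str, list[str]] = {
    "food": ["rival-food-1.com", "rival-food-2.com"],
    "personal care": ["rival-care-1.com"],
}


def top_competitor_rate(category: str, competitor_rates: dict[str, float]) -> float:
    """Highest citation rate among the competitors defined for this category only."""
    return max(competitor_rates[rival] for rival in COMPETITIVE_SETS[category])


def citation_gap(brand_rate: float, category: str, competitor_rates: dict[str, float]) -> float:
    """Positive when the category's leading competitor is cited more often than the brand."""
    return top_competitor_rate(category, competitor_rates) - brand_rate


rates = {"rival-food-1.com": 0.5, "rival-food-2.com": 0.75, "rival-care-1.com": 0.9}
print(top_competitor_rate("food", rates))  # 0.75: the personal care rival is ignored
print(citation_gap(0.5, "food", rates))    # 0.25
```

Because the competitive set is looked up by category, a food brand is never benchmarked against a personal care competitor, yet every brand's gap is expressed on the same 0-1 scale for portfolio comparison.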

Building Your Enterprise Query Architecture

The foundation of scalable enterprise AI visibility management is a standardised query template that produces comparable data across brands while accommodating category-specific customisation.

Tier 1: Universal queries (run for every brand in the portfolio)

These establish baseline comparability across all brands:

"What is [brand name]?" - establishes training data depth and description accuracy

"Is [brand name] good?" - surfaces AI sentiment and positioning

"[Brand name] vs alternatives" - reveals competitive citation context

Tier 2: Category queries (customised per product/service category)

These drive commercial citation insight:

"Best [product category] for [primary use case]" - primary commercial citation query

"Compare top [category] options" - competitive landscape citation query

"What [category] do experts recommend?" - authority citation query

Tier 3: Brand-specific queries (5-10 per brand)

These capture specific use cases, product lines, and markets where individual brand strategy differs from category norms. They are managed at brand level and are not required for portfolio rollup reporting.

This three-tier architecture means every brand is tracked on comparable Tier 1 and Tier 2 queries - enabling portfolio-level benchmarking - while brand-specific detail is maintained in Tier 3 for brand managers.
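
The three tiers translate naturally into shared query templates plus a per-brand list. A minimal Python sketch, assuming each brand record carries its category, a primary use case, and its own Tier 3 queries (the `Brand` fields, template constants, and function names are illustrative, not part of any tool):

```python
from dataclasses import dataclass, field

# Tier 1: identical for every brand in the portfolio.
TIER_1 = ["What is {brand}?", "Is {brand} good?", "{brand} vs alternatives"]

# Tier 2: identical templates, customised only by category and use case.
TIER_2 = [
    "Best {category} for {use_case}",
    "Compare top {category} options",
    "What {category} do experts recommend?",
]


@dataclass
class Brand:
    name: str
    category: str
    primary_use_case: str
    brand_specific: list[str] = field(default_factory=list)  # Tier 3, roughly 5-10 per brand


def build_query_set(brand: Brand) -> list[tuple[int, str]]:
    """Return (tier, query) pairs: shared templates for Tiers 1-2, custom Tier 3."""
    queries = [(1, t.format(brand=brand.name)) for t in TIER_1]
    queries += [
        (2, t.format(category=brand.category, use_case=brand.primary_use_case))
        for t in TIER_2
    ]
    queries += [(3, q) for q in brand.brand_specific]
    return queries


def rollup_queries(brand: Brand) -> list[str]:
    """Only Tier 1 and Tier 2 queries feed portfolio rollup reporting."""
    return [q for tier, q in build_query_set(brand) if tier in (1, 2)]


brand = Brand("Acme Oats", "breakfast cereal", "high-fibre diets", ["Acme Oats gluten-free range"])
for tier, query in build_query_set(brand):
    print(tier, query)
```

Because every brand's Tier 1 and Tier 2 queries come from the same templates, `rollup_queries` yields directly comparable query sets across the portfolio, while Tier 3 stays brand-specific.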

Setting Up the Technical Infrastructure

At enterprise scale, manual monitoring is operationally impossible. The monitoring infrastructure needs to be automated across all engines and all brands in the portfolio.

AI Rank Lab's multi-domain management supports enterprise portfolio tracking by allowing multiple client domains to be managed from a single workspace, with the AI Visibility Tracker running automated weekly monitoring per domain across ChatGPT, Gemini, Claude, and Perplexity. For enterprise teams, this means:

All portfolio brands tracked simultaneously without per-brand manual effort

Consistent query sets run across all brands for comparable data

Weekly citation rate reports available at brand level for brand managers

Aggregate portfolio data available for regional and executive rollup reporting

The monitoring configuration should mirror the reporting hierarchy: group brands by region, category, or subsidiary structure so that portfolio rollup reporting can be generated at the appropriate level without manual aggregation.
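
A minimal sketch of a hierarchy-aware configuration, assuming each tracked domain is tagged with its region and category. The domains, tag names, and `group_domains` helper are hypothetical and do not represent AI Rank Lab's own configuration format.

```python
from collections import defaultdict

# Hypothetical portfolio configuration: each tracked domain carries the tags
# that the reporting hierarchy rolls up on.
PORTFOLIO: dict[str, dict[str, str]] = {
    "brand-a.com": {"region": "EMEA", "category": "food"},
    "brand-b.com": {"region": "EMEA", "category": "personal care"},
    "brand-c.com": {"region": "APAC", "category": "food"},
}


def group_domains(portfolio: dict[str, dict[str, str]], level: str) -> dict[str, list[str]]:
    """Group domains by one hierarchy level, e.g. 'region' or 'category'."""
    groups: dict[str, list[str]] = defaultdict(list)
    for domain, tags in portfolio.items():
        groups[tags[level]].append(domain)
    return dict(groups)


print(group_domains(PORTFOLIO, "region"))
# {'EMEA': ['brand-a.com', 'brand-b.com'], 'APAC': ['brand-c.com']}
```

With this design, adding another rollup level (for example, subsidiary) only requires a new tag on each domain; the grouping code does not change.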

Enterprise Reporting Framework

Three reporting levels serve different enterprise stakeholders:

Brand-level report (monthly, for brand managers)

Metrics: citation rate per AI engine, citation rate by query tier, top and bottom performing queries, month-over-month changes, competitive citation rate for the brand's primary competitive set. This report drives the content and technical investment decisions at brand level.
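
Most brand-level metrics reduce to simple ratios once each monitoring run is stored as a record. This sketch assumes "citation rate" means the share of query runs in which the brand was cited; the `Result` record and helper names are hypothetical, not a tracker export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Result:
    engine: str   # e.g. "ChatGPT", "Gemini", "Claude", "Perplexity"
    tier: int     # query tier 1, 2 or 3
    query: str
    cited: bool   # whether the brand was cited in the engine's answer


def citation_rate_by(results: list[Result], key: str) -> dict:
    """Share of results in which the brand was cited, grouped by one attribute."""
    totals: dict = defaultdict(int)
    hits: dict = defaultdict(int)
    for r in results:
        k = getattr(r, key)
        totals[k] += 1
        hits[k] += r.cited  # a bool adds 0 or 1
    return {k: hits[k] / totals[k] for k in totals}


def month_over_month(current: float, previous: float) -> float:
    """Change in citation rate, in percentage points."""
    return round((current - previous) * 100, 1)


results = [
    Result("ChatGPT", 1, "What is Acme?", True),
    Result("ChatGPT", 2, "Best cereal for high-fibre diets", False),
    Result("Perplexity", 1, "What is Acme?", True),
    Result("Perplexity", 2, "Best cereal for high-fibre diets", True),
]
print(citation_rate_by(results, "engine"))  # {'ChatGPT': 0.5, 'Perplexity': 1.0}
print(citation_rate_by(results, "tier"))    # {1: 1.0, 2: 0.5}
print(month_over_month(0.42, 0.35))         # 7.0
```

Grouping by `"query"` instead gives per-query rates, which can be sorted to produce the top and bottom performing queries.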

Regional/category rollup (monthly, for regional directors and category heads)

Metrics: aggregate citation rate across all brands in the region or category, ranking of brands by citation rate and by citation gap opportunity, regional competitive summary. This report enables resource allocation decisions: which brands in the region have the largest citation gap relative to competitive standing.
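
A regional rollup can then be computed from per-brand snapshots. This sketch assumes the aggregate rate is an unweighted mean across brands and that the citation gap is the leading competitor's rate minus the brand's rate; `BrandSnapshot`, `regional_rollup`, and the sample values are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class BrandSnapshot:
    domain: str
    region: str
    citation_rate: float        # brand's citation rate for the month, 0-1
    top_competitor_rate: float  # leading competitor in the brand's competitive set, 0-1

    @property
    def citation_gap(self) -> float:
        # Positive when the leading competitor is cited more often than the brand.
        return self.top_competitor_rate - self.citation_rate


def regional_rollup(snapshots: list[BrandSnapshot], region: str) -> dict:
    """Aggregate rate plus brand rankings by citation rate and by citation gap."""
    in_region = [s for s in snapshots if s.region == region]
    if not in_region:
        raise ValueError(f"no brands tracked in region {region!r}")
    by_rate = sorted(in_region, key=lambda s: s.citation_rate, reverse=True)
    by_gap = sorted(in_region, key=lambda s: s.citation_gap, reverse=True)
    return {
        "aggregate_citation_rate": mean(s.citation_rate for s in in_region),
        "ranked_by_rate": [s.domain for s in by_rate],
        "ranked_by_gap": [s.domain for s in by_gap],
    }


snapshots = [
    BrandSnapshot("brand-a.com", "EMEA", 0.5, 0.75),
    BrandSnapshot("brand-b.com", "EMEA", 0.25, 0.75),
    BrandSnapshot("brand-c.com", "APAC", 0.5, 0.5),
]
print(regional_rollup(snapshots, "EMEA"))
```

In this sample, brand-b.com ranks last on citation rate but first on gap opportunity, which is the kind of brand a regional director would want surfaced for resource allocation.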

Portfolio executive summary (quarterly, for CMO and leadership)

Metrics: portfolio AI visibility score (aggregate weighted citation rate), quarter-over-quarter trend, share of AI voice vs. key portfolio-level competitors, top five citation gap opportunities by commercial value. This report connects AI search investment to commercial outcomes and competitive positioning at a level meaningful to executive stakeholders.
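
Neither portfolio metric has a single standard definition. A minimal sketch, assuming the portfolio score is a revenue-weighted average of brand citation rates and that share of AI voice is portfolio citations divided by all citations observed for the portfolio and its tracked competitors; function names and figures are illustrative.

```python
def portfolio_visibility_score(rates: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of brand citation rates (weights assumed to be revenue)."""
    total_weight = sum(weights[brand] for brand in rates)
    return sum(rates[brand] * weights[brand] for brand in rates) / total_weight


def share_of_ai_voice(citation_counts: dict[str, int], portfolio: set[str]) -> float:
    """Portfolio citations as a share of all citations, portfolio plus competitors."""
    total = sum(citation_counts.values())
    ours = sum(n for name, n in citation_counts.items() if name in portfolio)
    return ours / total if total else 0.0


rates = {"brand-a.com": 0.5, "brand-b.com": 0.25}
revenue = {"brand-a.com": 300.0, "brand-b.com": 100.0}  # placeholder figures
print(portfolio_visibility_score(rates, revenue))  # 0.4375

counts = {"brand-a.com": 30, "brand-b.com": 10, "rival.com": 60}
print(share_of_ai_voice(counts, {"brand-a.com", "brand-b.com"}))  # 0.4
```

The quarter-over-quarter trend is then simply the difference between successive quarterly scores.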

Resource Allocation Prioritisation

Enterprise AI visibility programs work best when investment is directed by a systematic prioritisation framework. A simple scoring model for prioritising brands for active AEO (answer engine optimisation) intervention:

| Factor | Weight | Data source |
|---|---|---|
| Brand revenue contribution | 40% | Finance |
| Current AI citation gap (vs. top competitor) | 30% | AI Rank Lab tracker |
| AI search query volume for category | 20% | Keyword research |
| Existing SEO content strength | 10% | AEO audit score |

Brands with the highest composite scores - high revenue, large citation gap, high query volume - receive active intervention: new AEO content, schema markup implementation, and structural page optimisation. Lower-scoring brands receive passive monitoring until their commercial priority or citation gap shifts.
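
The scoring table can be applied once each factor is put on a common scale. This sketch min-max normalises every factor across the portfolio before applying the 40/30/20/10 weights. The normalisation method, the 0.5 cut-off between active intervention and passive monitoring, and treating higher SEO content strength as raising priority are all assumptions; brand names and values are placeholders.

```python
# Weights from the prioritisation table; factor keys are hypothetical names.
WEIGHTS = {
    "revenue": 0.40,       # brand revenue contribution (Finance)
    "citation_gap": 0.30,  # gap vs. top competitor (tracker)
    "query_volume": 0.20,  # AI search query volume for category (keyword research)
    "seo_strength": 0.10,  # existing SEO content strength (AEO audit score)
}


def min_max(values: dict[str, float]) -> dict[str, float]:
    """Rescale one factor to 0-1 across the portfolio."""
    lo, hi = min(values.values()), max(values.values())
    if hi == lo:
        # A factor that does not vary cannot separate brands, so it contributes nothing.
        return {k: 0.0 for k in values}
    return {k: (v - lo) / (hi - lo) for k, v in values.items()}


def priority_scores(factors: dict[str, dict[str, float]]) -> dict[str, float]:
    """factors maps factor name -> {brand: raw value}; returns brand -> score in 0-1."""
    normalised = {f: min_max(vals) for f, vals in factors.items()}
    brands = next(iter(factors.values())).keys()
    return {b: round(sum(WEIGHTS[f] * normalised[f][b] for f in WEIGHTS), 3) for b in brands}


def split_by_threshold(scores: dict[str, float], threshold: float = 0.5):
    """Split brands into (active intervention, passive monitoring), highest score first."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    active = [b for b in ranked if scores[b] >= threshold]
    passive = [b for b in ranked if scores[b] < threshold]
    return active, passive


factors = {
    "revenue": {"a": 100, "b": 50, "c": 10},        # e.g. revenue in millions
    "citation_gap": {"a": 30, "b": 10, "c": 20},    # percentage points behind top competitor
    "query_volume": {"a": 1000, "b": 5000, "c": 3000},
    "seo_strength": {"a": 80, "b": 60, "c": 40},    # AEO audit score
}
scores = priority_scores(factors)
print(scores)                      # {'a': 0.8, 'b': 0.428, 'c': 0.25}
print(split_by_threshold(scores))  # (['a'], ['b', 'c'])
```

Min-max scaling keeps factors with very different units (revenue, percentage points, search volume) from dominating the composite purely because of their magnitude; if your team reads strong existing SEO content as lowering the need for intervention, invert that factor before weighting.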

Common Enterprise AI Visibility Mistakes

Treating AI visibility as a one-brand problem. Enterprise AI visibility programs that start with the flagship brand and never scale to the portfolio miss the citation gaps that accumulate across smaller brands - often in markets where no brand manager has the visibility mandate to identify them.

Using inconsistent query sets across brands. Brands monitored on different queries cannot be compared in portfolio reporting. Standardise Tier 1 and Tier 2 query templates before deploying monitoring at scale.

Reporting only to brand managers. Executive-level AI visibility reporting ties the investment to business outcomes and competitive positioning, which is what sustains budget. Without it, AI visibility programs risk being cut in budget cycles before they produce measurable returns.

Monitoring only Google AI Overviews. Enterprise teams that use only BrightEdge or Conductor for AI visibility monitoring have comprehensive Google AI Overview data but no visibility into ChatGPT, Perplexity, or Claude - the channels where AI-influenced purchase decisions are increasingly made for many product categories.

For enterprise teams building AI visibility management at portfolio scale, the combination of AI Rank Lab's multi-domain tracking and the reporting framework outlined here provides the operational infrastructure for systematic, scalable management of AI search visibility across complex brand portfolios.

Contact AI Rank Lab to discuss enterprise plans and multi-domain configuration for portfolios above 10 domains.

Frequently Asked Questions

How do enterprises manage AI visibility across hundreds of brands?

What is a reasonable number of queries per brand for enterprise AI monitoring?

How do you build the business case for enterprise AI visibility investment?


Written by

Devanshu

AI Search Optimization Expert