In 2024, a new web standard was proposed: a file called llms.txt that would tell AI language models what they can and cannot do with your website's content. The concept spread quickly through the SEO and AEO community, spawning blog posts, implementation guides, and healthy debate about whether it matters at all.

Here is an honest, up-to-date answer to every question businesses are asking about llms.txt: what it is, what problem it actually solves, which AI systems respect it, and what creating one will and will not do for your AI search visibility.

What Is LLMs.txt?

LLMs.txt is a plain text file placed at the root of a website (at yourdomain.com/llms.txt) that provides structured guidance to large language models about the site's content. The proposed standard was introduced by Jeremy Howard in 2024 as an AI-era analogue to robots.txt.

The concept draws from two existing conventions:

robots.txt - Tells search engine crawlers which pages to index and which to skip

humans.txt - An informal standard for sharing information about the humans behind a website

LLMs.txt extends these ideas to LLMs specifically - providing a structured, machine-readable file that an AI system could read to understand the site's content structure, key pages, and any guidance about how the content should or should not be used in AI-generated responses.

What Does an LLMs.txt File Look Like?

The proposed format uses Markdown. A basic example:

# AI Rank Lab

> AI visibility tracking and AEO optimisation platform for businesses and agencies.

## Key Pages

- [How It Works](https://airanklab.com/how-it-works): Overview of how AI citation tracking works

- [Pricing](https://airanklab.com/pricing): Subscription plans and agency tiers

- [Blog](https://airanklab.com/blog): Guides on AEO, GEO, and AI search visibility

## Documentation

- [API Docs](https://airanklab.com/api-docs): Developer documentation for the AI Rank Lab API

## About

- [About](https://airanklab.com/about): Company background and mission

## Optional: llms-full.txt

- [Full content](https://airanklab.com/llms-full.txt): Complete site content in a single LLM-optimised fileA companion file, llms-full.txt, is sometimes created alongside the index file - a single large document containing all the site's content in clean, LLM-readable format (stripped of navigation, ads, and HTML boilerplate). The idea is that an AI system could read the full content file to get a comprehensive view of the site without needing to crawl every page individually.

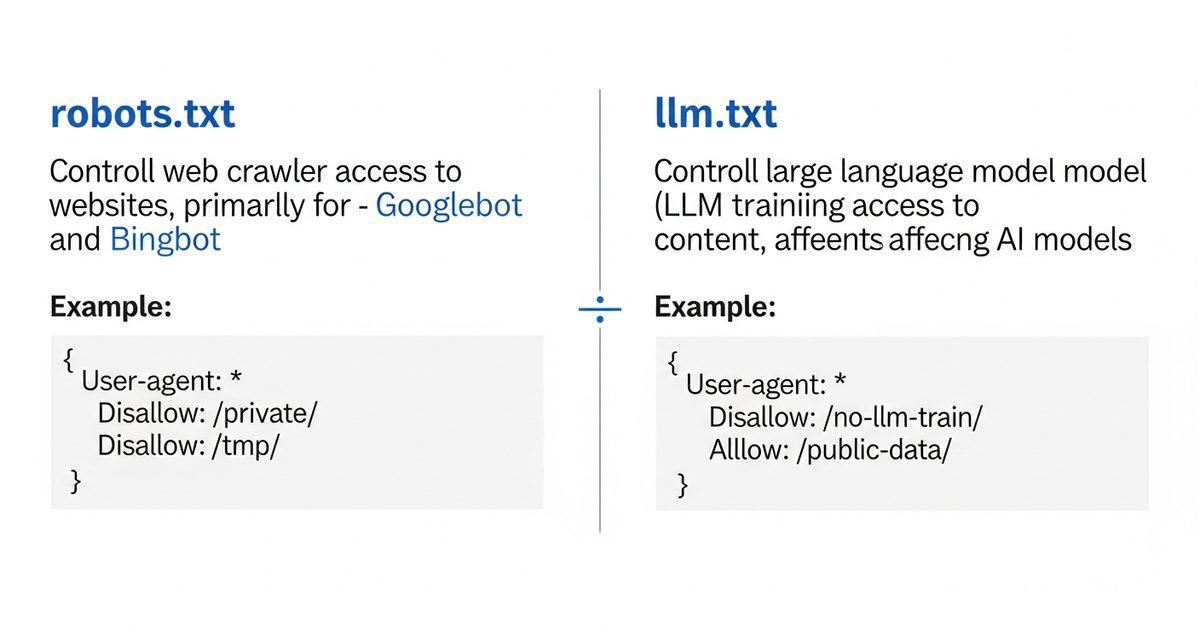

How LLMs.txt Differs from robots.txt

Feature | robots.txt | llms.txt |

|---|---|---|

Primary audience | Search engine crawlers (Googlebot, Bingbot) | Large language models and AI systems |

Primary function | Allow/disallow page crawling | Structure and guide AI content consumption |

Format | Directive-based syntax (User-agent, Allow, Disallow) | Markdown with page descriptions and links |

Industry standard | Established, universally respected | Proposed standard, adoption varies by AI system |

Effect on indexing | Direct - Googlebot will not crawl disallowed paths | Indirect - LLMs may or may not read or follow it |

Required for AEO | Not directly, but affects indexability | Not required, but potentially beneficial |

The critical difference is enforcement. robots.txt disallow directives are consistently respected by major search engine crawlers - there are real consequences (being blacklisted or penalised) for AI crawlers that ignore them. LLMs.txt has no equivalent enforcement mechanism. An AI system may read it, use it, ignore it, or never know it exists depending on how that system retrieves and processes web content.

Do Major AI Engines Actually Use LLMs.txt?

This is the most practically important question, and the honest answer is: it depends on the system, and it is evolving.

As of early 2026:

ChatGPT (when browsing): ChatGPT's browsing mode retrieves web content in real-time. Whether it specifically reads and acts on llms.txt files is not officially documented by OpenAI, but the format can influence how content is parsed and prioritised when pages are retrieved.

Perplexity: Perplexity actively crawls the web for its retrieval-augmented generation. There is growing evidence that Perplexity's crawler (PerplexityBot) respects llms.txt guidance, though this is not formally documented in their public policies.

Claude (Anthropic): Anthropic has not made public statements about llms.txt support in their crawling infrastructure. Claude's training data is pre-cutoff; its real-time retrieval features may handle llms.txt differently.

Google Gemini: Google's AI systems primarily draw from Google's existing web index. LLMs.txt has no documented effect on how Google's systems process content for Gemini responses.

Training data crawlers: Several AI companies operate web crawlers for training data collection (Common Crawl, GPTBot, ClaudeBot, Googlebot-extended). These crawlers may read llms.txt as a signal, but the standard is not universally adopted in training data pipelines.

The practical position: llms.txt adoption by AI systems is growing but inconsistent. Creating the file is low-effort and positions your site for better AI compatibility as adoption increases - but it is not a guaranteed citation rate lever in the way that schema markup or content structure are.

What LLMs.txt Can and Cannot Do for AI Visibility

What it can do

Help AI systems that do read it understand your site's content architecture and key pages

Signal to AI crawlers that you are actively thinking about AI-era content accessibility

Provide a clean, structured entry point to your content for AI systems that process the file

If you create an llms-full.txt companion file, give AI systems access to clean, boilerplate-free versions of your content that are easier to process and cite

Potentially improve citation rates on Perplexity, which appears to be the most active early adopter of the standard

What it cannot do

Force ChatGPT, Gemini, or Claude to cite your content in responses

Replace the impact of schema markup, content structure, and topical authority on AI citations

Block AI engines from using your content if they are not designed to respect the standard

Guarantee improved citation rates without the underlying content quality that drives citations

Substitute for the content-level optimisations that are the primary drivers of AI visibility

How to Create an LLMs.txt File

Creating a basic llms.txt file takes 30-60 minutes for most sites. Here is the process:

Step 1: Create the file

Create a plain text file named llms.txt (all lowercase) and place it at your site's root so it is accessible at https://yourdomain.com/llms.txt. For most CMS platforms:

WordPress: Upload to the root directory via FTP or file manager, or use a plugin

Webflow: Add as a static file through the project's file manager

Shopify: Add as a theme file or use an app

Next.js / custom sites: Place in the

/publicdirectory

Step 2: Write the file content

Follow the proposed format:

# [Your Brand Name]

> [One to two sentence description of what your site/business is]

## [Section 1: e.g., Core Pages]

- [Page Title](URL): Brief description of what this page contains

- [Page Title](URL): Brief description

## [Section 2: e.g., Blog / Resources]

- [Page Title](URL): Brief description

## Optional

- [llms-full.txt](https://yourdomain.com/llms-full.txt): Complete site contentFocus on your most important and highest-quality pages. The file should prioritise pages that you want AI systems to use as sources - your most authoritative content, your product pages, your expert guides.

Step 3: Keep it updated

LLMs.txt is only useful if it reflects current site content. Add a process to update it when you publish significant new content or restructure your site. A quarterly review is the minimum; monthly is better if your content volume is high.

Step 4: Optionally create llms-full.txt

For sites with a manageable content volume, creating an llms-full.txt file provides AI systems with a single, clean document containing all your key content. The file should be stripped of HTML, navigation, ads, footers, and other boilerplate - just clean prose. This is most practical for sites with fewer than 50 core pages; for larger sites, it becomes difficult to maintain and too large to be useful.

Should You Prioritise LLMs.txt or Schema Markup?

If you are allocating limited time between llms.txt and schema markup, schema markup should take priority. Schema markup (particularly FAQPage, Article, and Organization schemas) has documented, measurable effects on AI citation rates across all major AI engines. LLMs.txt adoption by AI systems is still emerging and its citation rate impact is less consistent.

The good news is that these are not competing priorities. Creating a basic llms.txt file takes one to two hours. Implementing comprehensive schema markup across your key pages is a multi-week project. Do both - but allocate effort in proportion to documented impact.

LLMs.txt in the Context of Full AEO Implementation

LLMs.txt is one component of a comprehensive AI search optimisation approach, not a standalone fix. The full technical AEO stack for a site serious about AI citation rates includes:

Schema markup: FAQPage, Article, Organization, and product-relevant schemas - the highest-impact technical lever for AI citations

Content structure: Direct-answer H2/H3 formatting, question-led headings, explicit claims backed by data - the content-level equivalent of schema

Technical accessibility: Page speed, clean HTML, no JavaScript-dependent content that blocks AI crawlers

LLMs.txt: Structured AI-readable site map and optional full-content file

robots.txt: Ensure you are not accidentally blocking AI crawlers (GPTBot, PerplexityBot, ClaudeBot) in your robots.txt disallow rules

Check your robots.txt now. Many sites have broad disallow rules that inadvertently block legitimate AI crawlers, reducing citation rates across all AI engines. Allowing the major AI crawlers while blocking unwanted bot traffic requires explicit allow rules for the crawlers you want to permit.

The Practical Verdict

LLMs.txt is a legitimate and worthwhile addition to your AI visibility technical stack. It takes minimal time to implement, aligns your site with an emerging standard that AI systems are increasingly adopting, and signals technical credibility to AI-era crawlers. It is not a silver bullet for citation rates, and it will not substitute for content quality and schema markup. But it costs you almost nothing to implement and could provide incremental citation rate benefits as AI system adoption of the standard grows.

Implement it as part of your broader AEO technical checklist, measure whether Perplexity citation rates change following implementation, and revisit as AI system adoption of the standard becomes clearer. If you are tracking your AI citation rates across ChatGPT, Gemini, Perplexity, and Claude, you will be able to see whether the file contributes to measurable improvement - without needing to rely on speculation. AI Rank Lab tracks citation rates across all four engines, making pre/post testing of any technical change straightforward.

Frequently Asked Questions

What is an llms.txt file?▾

Does llms.txt actually improve AI search visibility?▾

How is llms.txt different from robots.txt?▾

Which AI crawlers does llms.txt affect?▾

Should I create llms-full.txt as well as llms.txt?▾

Get a Free AI Ranking Consultation

Want to improve your brand's visibility in AI search engines like ChatGPT, Gemini, and Perplexity? Fill out the form and our experts will create a personalized strategy for you.

Written by

Devanshu

AI Search Optimization Expert