When someone asks Perplexity AI "What is the best [tool/service] for [your use case]?", one of three things happens: your brand is cited, a competitor is cited, or no brand is cited. The same dynamic plays out in Google Gemini, Claude, and ChatGPT simultaneously - across thousands of queries in your market, every day.

Most brands have no systematic way to know which outcome is happening. They check manually, infrequently, and inconsistently - getting snapshots rather than a continuous picture of their AI search visibility across the engines where their potential customers are actively searching.

This guide covers how to set up comprehensive brand monitoring across Gemini, Perplexity, and Claude - including what to monitor, how to interpret what you find, and how to move from manual spot-checks to automated continuous tracking.

Why Monitor Each AI Engine Separately

Gemini, Perplexity, and Claude are not interchangeable. They have different training data cutoffs, different retrieval architectures, different citation biases, and different user bases. A brand that appears consistently in Perplexity responses may be virtually absent from Claude responses for the same queries. Understanding this variation is essential for both accurate measurement and effective optimisation.

Perplexity AI - uses real-time web retrieval for most queries, making it highly responsive to current content. It always shows its sources, making citation verification straightforward. It handles more than 780 million queries per month, with volume growing at 370% per year, and is particularly strong in tech, finance, and research-heavy queries.

Google Gemini - combines Google's search index with generative AI capabilities. Its results correlate more closely with Google's organic rankings than those of other AI engines, but are not identical. It receives 2 billion monthly visits and is strong across general consumer and commercial queries.

Claude (Anthropic) - used heavily in enterprise and professional contexts. Its training data and response characteristics differ from those of OpenAI's models. It has approximately 20 million active users and is growing. It is particularly relevant for B2B brands whose target audience includes knowledge workers.

ChatGPT - the largest AI engine by user count. Covered separately in our ChatGPT brand visibility guide but should be included in any comprehensive monitoring setup.

Building Your Brand Monitoring Query List

Effective AI brand monitoring starts with a structured query list that represents how your target audience actually searches. Build queries across four categories:

Category queries

These are the most important for brand visibility measurement. They show whether your brand is cited when users are actively researching options in your market - the highest-intent discovery moment.

"What is the best [your product/service category] for [primary use case]?"

"Which [category] should I use?"

"Compare the top [category] options"

"What [category] do experts recommend?"

Problem-solution queries

These reveal whether AI engines connect your brand to the problems it solves - critical for early-funnel visibility.

"How do I [solve the specific problem your product addresses]?"

"What is the best way to [achieve the outcome your product delivers]?"

"Tools for [task your product helps with]"

Direct brand queries

These show how AI engines describe your brand - what they say about it, whether the description is accurate, and whether key differentiators are represented.

"What is [your brand name]?"

"What does [your brand name] do?"

"Is [your brand name] good?"

"[Your brand name] reviews"

Competitive queries

These show how you are positioned relative to competitors in AI-generated comparisons.

"[Your brand] vs [competitor brand]"

"Alternatives to [competitor brand]" (check if you are listed)

"[Competitor brand] vs other options"

Start with 15-20 queries covering the highest-priority examples in each category. Review and expand the list quarterly as you discover new query patterns that are generating relevant responses.
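As a rough illustration, the four query categories can be kept as templates and filled in programmatically, so the same list is reused on every monitoring cycle. This is a minimal sketch: the placeholder names (`category`, `use_case`, `brand`, and so on), the `build_query_list` helper, and the example values are all hypothetical and should be replaced with your own market terms.

```python
# Templates mirror the four query categories; {placeholders} are
# hypothetical field names chosen for this sketch.
TEMPLATES = {
    "category": [
        "What is the best {category} for {use_case}?",
        "Which {category} should I use?",
        "Compare the top {category} options",
        "What {category} do experts recommend?",
    ],
    "problem_solution": [
        "How do I {problem}?",
        "What is the best way to {outcome}?",
        "Tools for {task}",
    ],
    "direct_brand": [
        "What is {brand}?",
        "What does {brand} do?",
        "Is {brand} good?",
        "{brand} reviews",
    ],
    "competitive": [
        "{brand} vs {competitor}",
        "Alternatives to {competitor}",
        "{competitor} vs other options",
    ],
}


def build_query_list(values: dict[str, str]) -> list[dict[str, str]]:
    """Fill every template and tag each query with its category."""
    queries = []
    for category, templates in TEMPLATES.items():
        for template in templates:
            queries.append(
                {"category": category, "query": template.format(**values)}
            )
    return queries


# Made-up example values for illustration only.
example_values = {
    "category": "project management tool",
    "use_case": "remote teams",
    "problem": "keep a distributed team on schedule",
    "outcome": "track project deadlines",
    "task": "sprint planning",
    "brand": "ExampleBrand",
    "competitor": "OtherBrand",
}

queries = build_query_list(example_values)
```

One brand and one competitor yield 14 queries here; adding a second competitor or a second use case brings the list into the 15-20 range.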

Setting Up Manual Monitoring on Each Engine

Monitoring your brand on Perplexity AI

Perplexity is the easiest engine to monitor manually because it always displays its sources. Go to perplexity.ai and run each query from your list. For each response:

Note whether your brand name appears in the generated text

Check the source citations shown below the response - are any from your domain?

Note which competitor brands are cited

Record how your brand is described if it does appear

Screenshot the full response for your records

Perplexity's real-time retrieval means results are more volatile than other engines - new content published today can influence citations within hours. This makes Perplexity both the most responsive engine to content improvements and the one requiring more frequent monitoring to detect changes.
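The checks above can be recorded consistently with a small helper. The sketch below assumes you paste the response text and the listed source URLs in by hand; it does not call any Perplexity API. The `Observation` structure and the function names are hypothetical, as are the brand names and domain in the example.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Observation:
    engine: str
    query: str
    brand_mentioned: bool
    own_domain_cited: bool
    competitors_cited: list[str] = field(default_factory=list)


def mentions(text: str, name: str) -> bool:
    """Case-insensitive match of a name that is not part of a longer word."""
    pattern = rf"(?<!\w){re.escape(name)}(?!\w)"
    return re.search(pattern, text, re.IGNORECASE) is not None


def cites_domain(source_urls: list[str], domain: str) -> bool:
    """True if any source URL is on the domain or one of its subdomains."""
    for url in source_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def record(engine, query, response_text, source_urls, brand, domain, competitors):
    """Turn one manually captured response into a structured observation."""
    return Observation(
        engine=engine,
        query=query,
        brand_mentioned=mentions(response_text, brand),
        own_domain_cited=cites_domain(source_urls, domain),
        competitors_cited=[c for c in competitors if mentions(response_text, c)],
    )


# Made-up response text and URLs for illustration only.
obs = record(
    engine="perplexity",
    query="What is the best project management tool for remote teams?",
    response_text="Popular options include OtherBrand and ExampleBrand, which suits remote teams.",
    source_urls=["https://www.examplebrand.com/pricing", "https://reviews.example.org/top-tools"],
    brand="ExampleBrand",
    domain="examplebrand.com",
    competitors=["OtherBrand", "ThirdBrand"],
)
```

Storing one observation per engine and query alongside the screenshot makes later cycles directly comparable.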

Monitoring your brand on Google Gemini

Access Gemini at gemini.google.com and run the same query list. Gemini's responses draw from Google's index, so its citations tend to correlate with Google organic rankings more closely than Perplexity's do. Key things to note in Gemini responses:

Whether your brand appears in the generated text

Whether Gemini uses the "Google it" source chip to link to your site

How Gemini frames your brand relative to competitors

Whether Gemini's description of your brand matches your positioning and approved messaging

Gemini results can vary between signed-in and signed-out states, and between users in different regions. For comprehensive monitoring, run queries in an incognito window to get the baseline non-personalised response.

Monitoring your brand on Claude

Access Claude at claude.ai and run your query list. Claude typically does not use real-time web retrieval by default (though this varies by version and configuration), meaning responses draw primarily from training data. This makes Claude monitoring useful for understanding your baseline training data representation - how AI models that do not have live retrieval perceive your brand based on historical web content.

When monitoring Claude:

Note whether your brand appears and in what context

Pay attention to the specificity of information Claude provides about your brand - vague or generic descriptions may indicate limited training data presence

Compare Claude's response to Perplexity's for the same query - differences reveal where live retrieval changes citation outcomes versus training data alone
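The Claude-versus-Perplexity comparison can be sketched with plain sets. The example assumes you have already noted which brands each engine mentioned for the same query; the `compare_engines` helper and the brand names are hypothetical.

```python
def compare_engines(brands_by_engine: dict[str, set[str]], first: str, second: str):
    """Split the brands mentioned for one query into shared and engine-only sets."""
    a = brands_by_engine[first]
    b = brands_by_engine[second]
    return {
        "both": a & b,
        f"only_{first}": a - b,
        f"only_{second}": b - a,
    }


# Made-up observations for a single query.
brands_by_engine = {
    "perplexity": {"ExampleBrand", "OtherBrand"},
    "claude": {"OtherBrand"},
}

diff = compare_engines(brands_by_engine, "perplexity", "claude")
```

A brand that lands in `only_perplexity` is being picked up through live retrieval but is not surfacing from training data alone, which is the gap this comparison is meant to expose.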

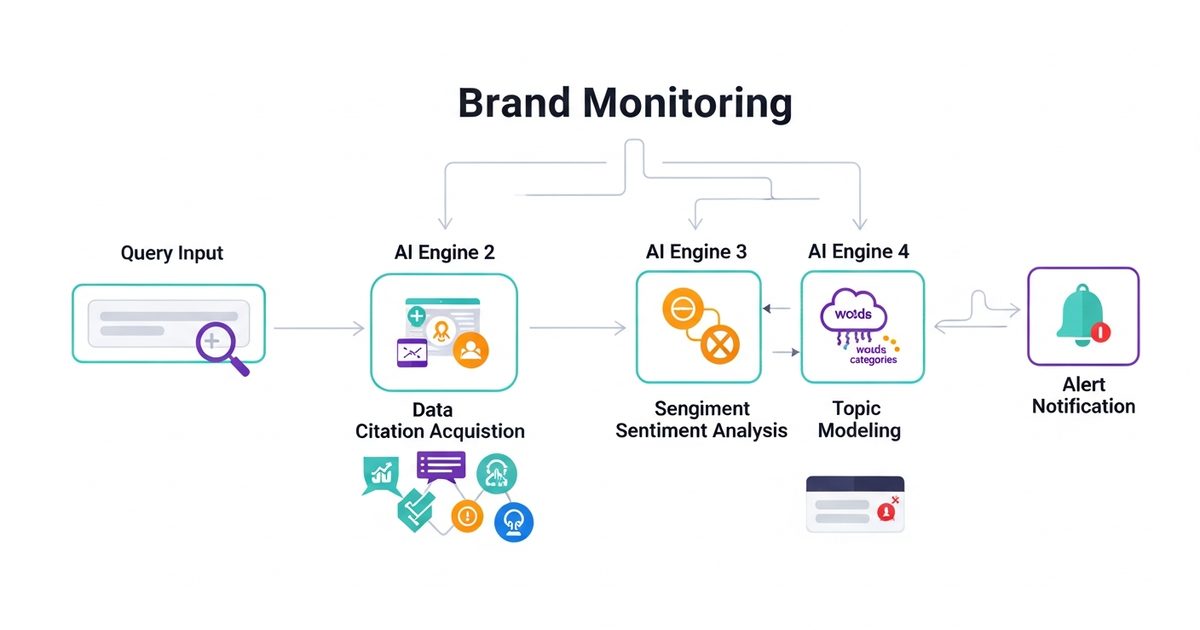

Moving to Automated Brand Monitoring

Manual monitoring is sufficient for an initial assessment but does not scale to ongoing brand tracking across 15-20 queries and four AI engines on a weekly cadence. The manual process for a single brand takes two to three hours per monitoring cycle, which is unsustainable as a regular practice.
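To make the scaling problem concrete, the figures above can be multiplied out. This is a back-of-envelope sketch: the function name is hypothetical, and pairing the low query count with the low time estimate (and high with high) is an assumption made only to bracket the range.

```python
def monitoring_load(queries: int, engines: int, hours_per_cycle: float,
                    cycles_per_year: int = 52) -> dict[str, float]:
    """Multiply out the manual workload for a weekly monitoring cadence."""
    responses_per_cycle = queries * engines
    return {
        "responses_per_cycle": responses_per_cycle,
        "responses_per_year": responses_per_cycle * cycles_per_year,
        "hours_per_year": hours_per_cycle * cycles_per_year,
    }


low = monitoring_load(queries=15, engines=4, hours_per_cycle=2)
high = monitoring_load(queries=20, engines=4, hours_per_cycle=3)
# low  -> 60 responses per cycle, 104 hours per year
# high -> 80 responses per cycle, 156 hours per year
```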

AI Rank Lab's AI Visibility Tracker automates this entire process. You add your domain, enter your target queries, and the platform runs them automatically across ChatGPT, Gemini, Claude, and Perplexity on a weekly basis. The dashboard shows:

Your citation rate per engine per query over time

Which queries are producing citations and which are not

How your citation rate compares to competitor brands on the same query set

Week-over-week changes that signal when something has shifted - positive or negative
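The metrics in this list can be illustrated with a short calculation. The sketch below assumes "citation rate" means the share of tracked queries on which your brand was cited, per engine per week; that definition, the row layout, and the function names are assumptions for illustration and not a description of how AI Rank Lab computes its figures.

```python
from collections import defaultdict


def citation_rates(rows):
    """rows: iterable of (week, engine, query, cited) tuples.

    Returns {(week, engine): share of queries on which the brand was cited}.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for week, engine, _query, cited in rows:
        totals[(week, engine)] += 1
        hits[(week, engine)] += int(cited)
    return {key: hits[key] / totals[key] for key in totals}


def week_over_week(rates, engine, previous_week, current_week):
    """Change in citation rate for one engine between two weeks."""
    return rates[(current_week, engine)] - rates[(previous_week, engine)]


# Made-up tracking rows for illustration only.
rows = [
    ("2025-W01", "perplexity", "q1", True),
    ("2025-W01", "perplexity", "q2", False),
    ("2025-W01", "perplexity", "q3", False),
    ("2025-W01", "perplexity", "q4", False),
    ("2025-W02", "perplexity", "q1", True),
    ("2025-W02", "perplexity", "q2", True),
    ("2025-W02", "perplexity", "q3", False),
    ("2025-W02", "perplexity", "q4", False),
]

rates = citation_rates(rows)
change = week_over_week(rates, "perplexity", "2025-W01", "2025-W02")
```

In this made-up data the Perplexity citation rate moves from 0.25 to 0.5, a week-over-week change of 0.25.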

For brands monitoring multiple product lines, multiple geographic markets, or multiple competitive sets, the ability to run comprehensive automated tracking rather than manual spot-checks is the difference between knowing your AI visibility status and guessing at it.

What to Do With What You Find

Brand monitoring data is only useful if it informs action. Once you have established your baseline monitoring, the findings typically fall into three response categories:

You appear but are described inaccurately

When AI engines cite your brand but misrepresent it - wrong use case, outdated features, incorrect positioning - the fix is improving the quality and completeness of the authoritative source content about your brand on your own website and in your official public communications. Ensure your homepage, about page, and key product pages clearly state what your brand does, who it is for, and what differentiates it - in language that is structured for AI extraction with clear headings and schema markup.
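As one possible form of schema markup, a schema.org `Organization` description can be generated as JSON-LD and embedded in your key pages. This is a minimal sketch: the helper name and all example values are hypothetical, and which properties any given AI engine actually reads is not something this guide establishes.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),  # official profiles elsewhere on the web
    }
    return json.dumps(data, indent=2)


# Made-up brand details for illustration only.
snippet = organization_jsonld(
    name="ExampleBrand",
    url="https://www.examplebrand.com",
    description="Project management software for remote teams.",
    same_as=["https://www.linkedin.com/company/examplebrand"],
)

# Wrap the JSON-LD in the script tag used to embed it in a page's HTML.
html_block = f'<script type="application/ld+json">\n{snippet}\n</script>'
```

The `description` field is the place to state plainly what the brand does and who it is for, matching the wording used on the page itself.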

You do not appear on high-priority queries

Absence from category and problem-solution queries is the most commercially significant monitoring finding. Address it by: running a full AEO (answer engine optimisation) audit to identify the structural gaps preventing citation, creating AEO-optimised content specifically targeting the query patterns where you are absent, and building third-party presence on the review and editorial platforms that AI engines draw from for those query types.

Competitors appear instead of you

When specific competitors consistently appear for queries where you do not, analyse what they are doing differently. Check their content structure on those topics, their schema markup coverage, and their third-party mention presence. The competitive citation analysis in AI Rank Lab's tracker shows you precisely which competitors are cited for which queries - the starting point for closing the citation gap systematically.
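If you are working from your own manual records, a similar citation-gap view can be approximated by counting which competitors are cited on the queries where your brand is missing. The sketch below is a hypothetical helper with made-up data, not the method used by any particular tool.

```python
from collections import Counter


def citation_gap(cited_by_query: dict[str, set[str]], brand: str) -> Counter:
    """Count competitor citations on queries where `brand` is not cited."""
    gap = Counter()
    for _query, cited in cited_by_query.items():
        if brand not in cited:
            gap.update(cited)
    return gap


# Made-up monitoring results: query -> brands cited in the response.
cited_by_query = {
    "best tool for remote teams": {"OtherBrand", "ThirdBrand"},
    "tools for sprint planning": {"OtherBrand"},
    "what is ExampleBrand": {"ExampleBrand"},
}

gap = citation_gap(cited_by_query, "ExampleBrand")
top_competitor, missed_queries = gap.most_common(1)[0]
```

The competitor with the highest count is the one to analyse first, since it is taking the most queries where you are absent.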

Monitoring Cadence Recommendations

Weekly automated tracking - for all priority queries via AI Rank Lab, covering all four engines simultaneously

Monthly manual deep-dive - spot-check 5-10 high-priority queries manually across engines to verify automated findings and observe full response context

After AI model updates - major model updates from OpenAI, Google, and Anthropic can significantly shift citation patterns. Run a full manual check of your most important queries within a week of any major model release.

After significant content changes - when you publish major new content or restructure key pages, check citation rates for the affected queries to verify that changes are having the intended impact

AI brand monitoring is not a one-time project. Citation rates change continuously as models update, competitors publish new content, and your own content and authority evolve. Building monitoring into your regular workflow - with AI Rank Lab automating the ongoing tracking layer - ensures you maintain visibility into the channels where your potential customers are increasingly searching first.

Set up your free AI Rank Lab account and add your brand to the AI Visibility Tracker today. Your baseline citation rate across Gemini, Perplexity, Claude, and ChatGPT will be ready within your first reporting cycle.



Written by Devanshu, AI Search Optimization Expert