An AI search audit answers one question: why is this site being cited by AI engines at the rate it is, and what would change to improve that rate? Without a structured audit, AEO optimisation becomes guesswork - adding schema markup here, rewriting a heading there, without a clear picture of what is actually limiting citations.

This is the audit template used for AI visibility engagements. It covers every dimension that affects AI citation rates, produces a prioritised finding list, and is repeatable across clients in any industry. Work through the sections in order - each builds on the previous.

Before You Start: Establish Scope

Define these before running the audit:

Target AI engines: Which of the four (ChatGPT, Gemini, Perplexity, Claude) matter most for this client's audience? For B2B clients, all four matter; for consumer brands, ChatGPT and Perplexity usually carry the most weight. Run the full audit across all four regardless, and use this ranking to decide which engine's findings to act on first.

Core keyword set: 10-20 queries representing how the client's potential customers research their category in AI engines. Include category research queries, competitor comparison queries, and problem-solution queries.

Key competitors: 3-5 direct competitors to benchmark against. Competitor AI visibility data contextualises the client's performance.

Audit period: Baseline citation rates are measured over 2-4 weeks to account for AI response variability. Immediate spot-checks are useful; statistical rates require repeated measurement.

Section 1: Baseline Citation Rate Measurement

This is the foundation of the audit. Without a baseline, nothing else has context.

1.1 Current citation rate by engine

For each of the 10-20 target queries, record whether the client brand is cited in the AI engine's response. Run each query at least 3 times across different sessions to account for response variability. Calculate citation rate as: (number of runs with a citation / total runs measured) x 100, computed per query and per engine.

Record in a table: Query | ChatGPT rate | Gemini rate | Perplexity rate | Claude rate | Notes
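
A minimal sketch of that calculation, treating each repeated run as one measurement. The (query, engine, cited) record layout, the function name citation_rates, and the sample query are illustrative assumptions, not part of any particular tool.

```python
from collections import defaultdict

def citation_rates(runs):
    """Return {(query, engine): citation rate in percent} from logged runs.

    Each run is a (query, engine, cited) tuple; cited is True when the
    client brand appeared in that response.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for query, engine, cited in runs:
        totals[(query, engine)] += 1
        if cited:
            hits[(query, engine)] += 1
    return {key: 100 * hits[key] / totals[key] for key in totals}

# Hypothetical log: three ChatGPT runs and one Perplexity run of one query.
runs = [
    ("best crm for startups", "ChatGPT", True),
    ("best crm for startups", "ChatGPT", False),
    ("best crm for startups", "ChatGPT", True),
    ("best crm for startups", "Perplexity", False),
]
rates = citation_rates(runs)
```

Keeping the raw runs rather than only the percentages makes it possible to recompute rates later for a subset of queries or a different date range.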

1.2 Citation type breakdown

For queries where the client is cited, record how they appear:

Direct recommendation: AI names the client brand as a recommended tool or service provider

Source citation: AI cites client content as a source but does not recommend the brand directly

Comparison mention: AI mentions the client in a comparison context ("Brand X versus Brand Y")

Negative mention: Client is cited in a context that does not serve the brand (rare but worth flagging)

1.3 Competitor citation rates

Run the same queries for each of the 3-5 competitor brands. Record citation rates by engine. This produces the competitive visibility map - where the client sits relative to competitors on each AI engine and each query type.

Key question: For which queries are competitors being recommended while the client is absent? These are the highest-priority optimisation targets.
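
One way to answer the key question above from recorded results is sketched here. It assumes the audit has already reduced each query to the set of brands cited for it; the brand names and the function competitor_gaps are hypothetical.

```python
def competitor_gaps(cited_by_query, client):
    """List queries where at least one competitor is cited and the client is not."""
    gaps = []
    for query, brands in cited_by_query.items():
        competitors = sorted(b for b in brands if b != client)
        if client not in brands and competitors:
            gaps.append((query, competitors))
    return gaps

# Hypothetical results: brands cited for each target query on one engine.
cited_by_query = {
    "best crm for startups": {"RivalOne", "RivalTwo"},
    "crm with email automation": {"ClientCo", "RivalOne"},
    "how to migrate crm data": set(),
}
gaps = competitor_gaps(cited_by_query, "ClientCo")
```

Queries where nobody is cited are left out on purpose: they signal a content opportunity rather than a competitive gap.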

1.4 AI Overview presence (Google)

Check Google AI Overviews for the core keyword set. Record: which queries trigger an AI Overview, whether the client is cited in the Overview, and whether competitor content is cited. Use an incognito browser to minimise personalisation effects.

Section 2: Content Structure Analysis

AI engines cite content that directly answers questions. This section assesses whether the client's content is structured to be citable.

2.1 Heading structure audit

For each key page (product pages, service pages, and top-performing blog content):

Do H2 and H3 headings match the question format of target queries? ("What is X" rather than "About X")

Does each H2 section deliver a direct answer within the first 1-2 sentences beneath the heading?

Are headings specific enough to be citable? ("How to improve AI citation rates" is citable; "Tips for Better Results" is not)
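
The first check in this list can be roughly automated. The sketch below is a heuristic only, assuming that a heading counts as question-style when it starts with a common question word or ends with a question mark; the word list is an assumption and will need tuning per client.

```python
import re

# Assumed list of words that typically open a question-style heading.
QUESTION_OPENERS = ("what", "how", "why", "when", "which", "who", "where",
                    "can", "does", "do", "is", "are", "should")

def is_question_style(heading):
    """Rough check: heading starts with a question word or ends with '?'."""
    words = re.findall(r"[a-z']+", heading.lower())
    starts_with_opener = bool(words) and words[0] in QUESTION_OPENERS
    return heading.strip().endswith("?") or starts_with_opener

headings = [
    "What is answer engine optimisation",
    "About our platform",
    "How to improve AI citation rates",
    "Tips for Better Results",
]
# Headings that need a manual rewrite review.
flagged = [h for h in headings if not is_question_style(h)]
```

A heuristic like this only narrows the review list; whether a heading is specific enough to be citable still needs a human read.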

2.2 Direct-answer paragraph audit

AI engines pull answer snippets. Check whether the content includes:

Definition paragraphs that state what something is in the first sentence

Process paragraphs that state how many steps or what the outcome is upfront

Comparison content that explicitly compares options (rather than describing each option separately)

Statistic and data claims with sources cited (AI engines favour content with cited evidence)

2.3 Conversational query coverage

Map each of the 10-20 target queries to existing content. For each query:

Is there a page or section that directly answers this query?

Does that page use the query's exact phrasing or close variants in headings?

Is the answer complete within the page, or does it require the user to navigate elsewhere?

Queries with no matching content are content creation opportunities. Queries with weak matches are content improvement opportunities.
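
A first pass at this mapping can be scripted before the manual review. The sketch below scores each page by the share of query terms that appear in its headings; the stopword list, the 0.8 and 0.4 cut-offs, and the sample pages are all assumptions chosen for illustration.

```python
import re

STOPWORDS = {"a", "an", "the", "for", "to", "of", "in", "is", "what", "how", "do", "i"}

def terms(text):
    """Lowercased content words of a query or heading."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def coverage(query, headings_by_url):
    """Return (best_url, score): share of query terms found in a page's headings."""
    q = terms(query)
    best_url, best_score = None, 0.0
    for url, headings in headings_by_url.items():
        page_terms = set().union(*(terms(h) for h in headings))
        score = len(q & page_terms) / len(q) if q else 0.0
        if score > best_score:
            best_url, best_score = url, score
    return best_url, best_score

def classify(score, strong=0.8, weak=0.4):
    """Map a coverage score to an audit outcome (thresholds are assumptions)."""
    if score >= strong:
        return "covered"
    return "improve" if score >= weak else "create"

pages = {
    "/pricing": ["CRM pricing plans"],
    "/blog/migrate": ["How to migrate CRM data", "Common migration errors"],
}
match = coverage("how to migrate crm data", pages)
outcome = classify(coverage("best crm for startups", pages)[1])
```

Term overlap misses synonyms and paraphrases, so treat a "create" result as a prompt to check the site manually, not as proof that no content exists.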

2.4 Content freshness check

AI engines favour current content. Check:

Last-modified dates on key pages

Whether statistical claims, product features, or market data are current (not 2022-era)

Whether pages include explicit publication or update dates visible to crawlers
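
The last-modified check is straightforward to script once the dates are collected. This sketch assumes the dates have already been gathered into a dictionary and uses a 365-day cut-off, which is an arbitrary starting point and not a figure from any AI engine.

```python
from datetime import date

def stale_pages(last_modified, today, max_age_days=365):
    """Return URLs whose last-modified date is older than max_age_days."""
    return sorted(
        url for url, modified in last_modified.items()
        if (today - modified).days > max_age_days
    )

# Hypothetical key pages with their last-modified dates.
pages = {"/pricing": date(2025, 1, 10), "/blog/crm-guide": date(2022, 6, 1)}
stale = stale_pages(pages, today=date(2025, 6, 1))
```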

Section 3: Schema Markup Review

Schema markup is the highest-impact technical lever for AI citations. This section is non-negotiable.

3.1 Existing schema inventory

Use Google's Rich Results Test or a schema validator to check which schema types are currently implemented across key pages. Record: page URL | schema types present | validation errors.

3.2 FAQPage schema audit

FAQPage schema is the single most impactful schema type for AI citation rates. Check:

Is FAQPage schema present on product pages, service pages, and key blog posts?

Do the FAQ questions in schema match the actual conversational queries users ask in AI engines?

Are FAQ answers complete and direct (answerable without visiting the page)?

Are FAQ questions genuinely distinct, or are they variations of the same question?
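
For reference when auditing, the sketch below builds the JSON-LD shape that schema.org defines for FAQPage: a mainEntity list of Question nodes, each with an acceptedAnswer. The helper name faq_jsonld and the sample question and answer are illustrative.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage JSON-LD document from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

doc = faq_jsonld([
    ("What is an AI search audit?",
     "A structured review of why a site is cited by AI engines at its current rate."),
])
# The output belongs inside a <script type="application/ld+json"> tag on the page.
markup = json.dumps(doc, indent=2)
```

The answer text should match what is visible on the page, so it makes sense to generate the markup from the same source as the on-page FAQ copy.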

3.3 Article and Organization schema

Check for:

Article schema on all blog content (including datePublished, dateModified, author, publisher)

Organization schema at the site level (name, URL, logo, description, sameAs social profiles)

Person schema for named authors with expertise claims (relevant for EEAT signals)

Product or Service schema where applicable
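
A small checker can report which of the properties listed above are absent from a page's JSON-LD. This sketch assumes the JSON-LD has already been extracted and parsed into a dictionary with a single string @type; the field lists mirror this checklist, not schema.org's own requirements.

```python
# Properties this audit looks for, keyed by schema type (taken from the checklist).
AUDITED_FIELDS = {
    "Article": ["datePublished", "dateModified", "author", "publisher"],
    "Organization": ["name", "url", "logo", "description", "sameAs"],
}

def missing_fields(node):
    """Return the audited properties that are absent or empty in one JSON-LD node."""
    expected = AUDITED_FIELDS.get(node.get("@type"), [])
    return [field for field in expected if not node.get(field)]

# Hypothetical Article node extracted from a blog post.
article = {
    "@type": "Article",
    "datePublished": "2025-01-10",
    "author": {"@type": "Person", "name": "Example Author"},
}
gaps = missing_fields(article)
```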

3.4 Schema gap prioritisation

Rate schema gaps by impact: FAQPage gaps on high-traffic pages = Critical; Article schema gaps = High; Organization schema missing = High; other schema types = Medium.

Section 4: Technical Accessibility Audit

AI crawlers cannot cite content they cannot access. This section checks whether technical barriers are limiting citations.

4.1 AI crawler access

Review robots.txt for disallow rules that might block known AI crawlers. Check for explicit blocks of:

GPTBot (OpenAI)

PerplexityBot

ClaudeBot (Anthropic)

Google-Extended

Bingbot (used by some AI systems)

Many sites have broad disallow rules or wildcard blocks that inadvertently exclude these crawlers. Ensure the crawlers you want to allow are explicitly permitted.
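
The robots.txt review can be scripted with Python's standard-library parser. The sketch below checks one URL against the user agents listed above; the sample robots.txt and the example.com URL are hypothetical, and the parser's matching rules may differ in detail from how each crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended", "Bingbot"]

def blocked_crawlers(robots_txt, url):
    """Return the AI crawler user agents that robots_txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt: GPTBot is blocked site-wide, everyone else only from /admin/.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""
blocked = blocked_crawlers(robots_txt, "https://example.com/pricing")
```

Running the check against several key URLs, not just the homepage, catches path-level rules that block only part of the site.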

4.2 JavaScript rendering

Check whether key content on product and service pages is rendered in JavaScript. Content that requires JavaScript execution to appear is less reliably indexed by AI crawlers than server-rendered or static HTML content. Use the View Source test: if the content does not appear in raw HTML source, it is JavaScript-rendered.
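
The View Source test can be approximated in code by searching the unrendered HTML for a phrase that should be on the page. The sketch below uses the standard-library HTML parser and ignores text inside script and style tags; the two sample documents are hypothetical, and fetching the raw HTML is left out.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collect visible text from raw HTML, skipping script and style bodies."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)

def in_raw_html(raw_html, phrase):
    """True if phrase appears in the text of the unrendered HTML source."""
    extractor = TextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    text = " ".join(" ".join(extractor.parts).split())
    return phrase.lower() in text.lower()

static_page = "<html><body><h2>What is Acme CRM?</h2><p>Acme CRM is a sales tool.</p></body></html>"
js_page = '<html><body><div id="root"></div><script>render("Acme CRM is a sales tool.")</script></body></html>'
```

Here in_raw_html(static_page, "Acme CRM is a sales tool") is true and the same call on js_page is false, which is the signal that the content depends on JavaScript execution.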

4.3 Page speed and Core Web Vitals

AI engine citation rates correlate with content quality signals, and page speed is one of them. Check Largest Contentful Paint (LCP), Cumulative Layout Shift (CLS), and Interaction to Next Paint (INP) for key pages using PageSpeed Insights. Flag pages that fall in the poor range (LCP above 4 seconds, CLS above 0.25, INP above 500 milliseconds) for remediation.
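
Once the metrics are exported from PageSpeed Insights, flagging is a simple comparison. This sketch assumes the values have been copied into a dictionary per page with the hypothetical keys shown; the INP limit of 500 ms is Google's published boundary for a poor score.

```python
# Limits above which a metric counts as poor.
POOR_LIMITS = {"lcp_seconds": 4.0, "cls": 0.25, "inp_ms": 500}

def failing_metrics(metrics):
    """Return the names of metrics that exceed their poor-range limit."""
    return sorted(
        name for name, limit in POOR_LIMITS.items()
        if name in metrics and metrics[name] > limit
    )

# Hypothetical measurements for one page.
pricing_page = {"lcp_seconds": 5.2, "cls": 0.08, "inp_ms": 620}
failing = failing_metrics(pricing_page)
```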

4.4 llms.txt presence

Check whether the site has an llms.txt file at the root URL. If not, flag as a low-effort, medium-priority recommendation. If yes, verify the file is current and includes key pages with accurate descriptions.
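
Where a file exists, the "includes key pages" check can be sketched as below. It assumes the file follows the markdown link-list layout of the llms.txt proposal and that its contents have already been downloaded; the sample file and URLs are hypothetical.

```python
import re

# Matches markdown links of the form [Title](https://example.com/page).
LINK = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")

def missing_from_llms_txt(llms_txt, key_urls):
    """Return the key URLs that the llms.txt content does not link to."""
    listed = {url for _title, url in LINK.findall(llms_txt)}
    return [url for url in key_urls if url not in listed]

llms_txt = """\
# Acme CRM

> Sales CRM for small teams.

## Docs

- [Pricing](https://example.com/pricing): Plans and costs
- [Migration guide](https://example.com/blog/migrate): How to move data
"""
key_urls = ["https://example.com/pricing", "https://example.com/features"]
missing = missing_from_llms_txt(llms_txt, key_urls)
```

Checking that the descriptions are accurate still needs a manual read; the script only confirms that the key pages are listed.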

Section 5: Third-Party Signal Audit

AI engines draw on the broader web - review sites, news mentions, industry directories - not just the client's own content. This section assesses the off-site signal landscape.

5.1 Review platform presence

Check the client's presence and review volume on platforms that AI engines draw from: G2, Capterra, Trustpilot, Google Reviews, Clutch (for agencies), TripAdvisor (for hospitality), Healthgrades (for healthcare). Low review volume on major platforms is a citation rate suppressor.

5.2 Press and publication mentions

Search for brand mentions in industry publications, news sources, and recognised directories. AI engines weight mentions from authoritative sources more heavily. Assess: volume of mentions, quality of publication sources, recency.

5.3 Wikipedia and knowledge graph presence

Check whether the brand, founding team members, or key products have Wikipedia pages or Google Knowledge Panel entries. Established knowledge graph entries significantly increase AI citation likelihood for branded queries.

Section 6: Prioritised Action List

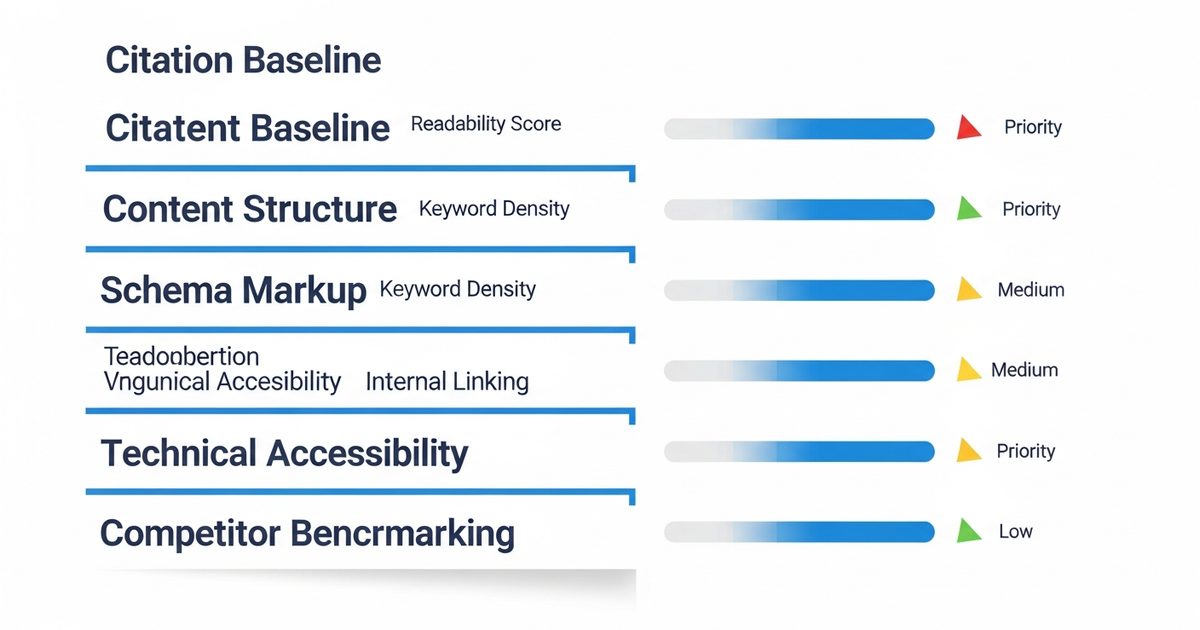

The audit findings feed into a prioritised action list. Use this priority framework:

Critical (fix within 2 weeks):

AI crawler blocked in robots.txt

Zero FAQPage schema on key commercial pages

Key content not accessible (JavaScript rendering blocking crawl)

High (fix within 30 days):

Organization schema missing

Key query types with no matching content

Article schema missing on blog posts

Competitor citation gap on high-intent queries

Medium (address within 60 days):

Heading structure not aligned with conversational query format

Content freshness issues on key pages

Low review platform presence

llms.txt missing

Low (ongoing improvement):

Content depth expansion on partially-covered topics

Press and publication mention building

Statistical data updates across existing content
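
The framework above can be turned into a dated action list with a few lines of code. In this sketch the Finding fields, the owner names, and the audit date are assumptions; the deadlines come from the priority bands above, with Low left undated because it is ongoing.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Days allowed per priority band; None means ongoing with no fixed deadline.
DEADLINE_DAYS = {"Critical": 14, "High": 30, "Medium": 60, "Low": None}
ORDER = list(DEADLINE_DAYS)

@dataclass
class Finding:
    title: str
    priority: str
    owner: str

def action_list(findings, audit_date):
    """Sort findings by priority and attach a due date to each."""
    rows = []
    for f in sorted(findings, key=lambda f: ORDER.index(f.priority)):
        days = DEADLINE_DAYS[f.priority]
        due = audit_date + timedelta(days=days) if days is not None else None
        rows.append((f.priority, f.title, f.owner, due))
    return rows

findings = [
    Finding("Content freshness issues on key pages", "Medium", "content team"),
    Finding("AI crawler blocked in robots.txt", "Critical", "web developer"),
    Finding("Press and publication mention building", "Low", "PR lead"),
]
rows = action_list(findings, audit_date=date(2025, 6, 1))
```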

Reporting the Audit Findings

A well-structured audit report includes: the baseline citation rate table with competitive context, a finding summary organised by section and priority, the prioritised action list with owner and timeline for each action, and the measurement plan - how and when citation rate progress will be tracked against the baseline.

The measurement plan is essential. Without pre-agreed measurement cadence, there is no way to demonstrate that the optimisation actions are working. Establish the baseline in the audit, define the next measurement point (typically 60 days post-implementation), and agree on what constitutes meaningful improvement.
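
Agreeing on "meaningful improvement" is easier when it is written down as a number. The sketch below compares baseline and follow-up rates per engine in percentage points; the 10-point threshold and the sample rates are placeholders to be replaced with whatever the client agrees.

```python
def rate_changes(baseline, followup, min_gain=10.0):
    """Per-engine change in percentage points, with a flag for meaningful gains."""
    changes = {}
    for engine, before in baseline.items():
        if engine in followup:
            delta = followup[engine] - before
            changes[engine] = (delta, delta >= min_gain)
    return changes

# Hypothetical citation rates (percent) at baseline and 60 days post-implementation.
baseline = {"ChatGPT": 20.0, "Gemini": 10.0}
followup = {"ChatGPT": 35.0, "Gemini": 15.0}
changes = rate_changes(baseline, followup)
```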

For running the baseline measurement and ongoing tracking, AI Rank Lab's citation tracking across ChatGPT, Gemini, Perplexity, and Claude provides the automated measurement infrastructure that makes repeatable audits practical. Start with a free baseline measurement before running the rest of the audit sections.

Frequently Asked Questions

What is an AI search audit?

How long does an AI search audit take?

What is the most important part of an AEO audit?

How do you measure AI citation rates for an audit?

How often should an AI search audit be run?

Written by

Devanshu

AI Search Optimization Expert