Six months ago, managing AI visibility for one client felt manageable. You could manually check whether their brand appeared in ChatGPT answers, document citation rates in a spreadsheet, and deliver a handcrafted monthly report. That approach does not scale to ten clients. It definitely does not scale to thirty.

As agencies add AI visibility services to their offerings, the operational question is not whether to measure AI citations - it is how to monitor, optimise, and report across a growing roster without the workload compounding linearly with each new client.

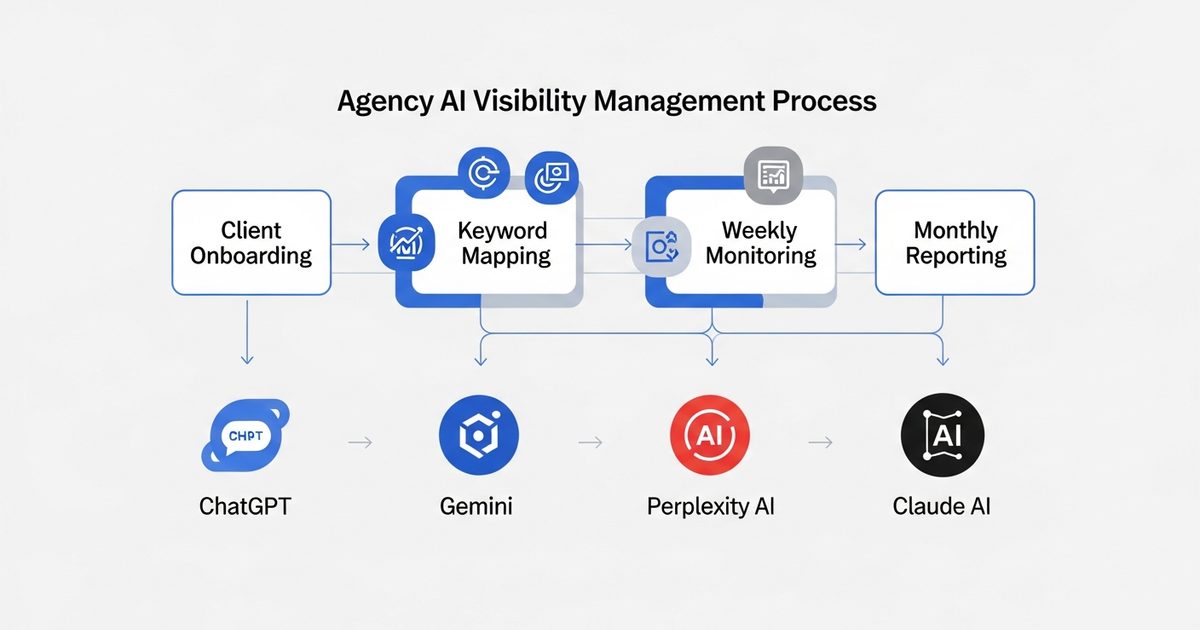

This is the operational framework that scales. It covers tooling, workflows, reporting cadence, and the common mistakes that cause agency AI visibility practices to collapse under their own weight.

Why Multi-Client AI Visibility Management Is Uniquely Hard

Traditional SEO management at scale is a solved problem. Tools like Ahrefs, SEMrush, and Moz are built for agency multi-client use: bulk keyword rank tracking, white-label reporting, client portals, team permissions. The infrastructure for managing 50 client SEO dashboards simultaneously exists and is mature.

AI visibility management does not yet have the same infrastructure maturity - but it is catching up fast. The specific challenges agencies face:

No native multi-client view in AI engines: You cannot log into ChatGPT and see citation rates for 30 clients at once. Manual checking scales at roughly 1 hour per client per week, which is unsustainable.

Different keyword sets per client: Each client has unique category terms, competitor comparisons, and service queries that AI engines might answer about them. Keyword mapping cannot be templated.

Citation rates shift weekly: AI engine citation rates are not as stable as Google rankings. A competitor publishing new content, a product review gaining traction, or an AI engine update can shift citation rates between reporting periods.

Client education burden: Most clients have no existing mental model for AI citation rates. Every reporting cycle requires explaining what the metric means before explaining whether it improved.

The Foundation: One Tool, All Clients

The single biggest operational leverage point is consolidating AI visibility tracking into one platform with genuine multi-client capability. Managing separate manual processes for each client - different spreadsheets, different checking schedules, different reporting formats - is where agency AI visibility practices collapse.

AI Rank Lab's agency tier supports multi-domain management with a unified dashboard that surfaces citation rates across ChatGPT, Gemini, Perplexity, and Claude for all client domains simultaneously. The operational difference this makes:

Weekly citation rate data appears for all clients without manual checking

Competitive benchmarking runs automatically against competitor domains

Alerts flag significant drops in client citation rates before the client notices

Export functions feed into reporting workflows without data entry

If you are managing more than three clients on AI visibility without a dedicated tracking tool, you are already spending more time on measurement than on optimisation. Fix the tooling first.

Client Onboarding: The Keyword Mapping Process

AI visibility tracking is only as useful as the keywords being tracked. The onboarding process for each new client determines the quality of everything downstream.

Step 1: Category research queries

These are the queries where AI engines will recommend solution providers in your client's category. For a project management software client, examples include: "best project management software for remote teams", "top project management tools for agencies", "project management software comparison 2026". Map 5-10 category queries that represent how users find solutions like your client's.

Step 2: Competitor comparison queries

Users frequently ask AI engines to compare specific brands. Map queries like "[Client Brand] vs [Competitor A]", "[Client Brand] alternatives", "[Client Brand] review". These are high-intent queries where your client either appears or does not appear - and where being absent is most commercially damaging.
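The comparison templates above can be expanded mechanically once the competitor list is agreed with the client. The snippet below is a minimal sketch of that idea; the function name and the example brand names are placeholders, not part of any tool's API.

```python
def comparison_queries(brand: str, competitors: list[str]) -> list[str]:
    """Expand the Step 2 query templates for a single client brand."""
    # One "[Client Brand] vs [Competitor]" query per named competitor.
    queries = [f"{brand} vs {competitor}" for competitor in competitors]
    # The two brand-only templates from the text.
    queries.append(f"{brand} alternatives")
    queries.append(f"{brand} review")
    return queries


# Placeholder names for illustration only.
for query in comparison_queries("ExampleBrand", ["RivalOne", "RivalTwo"]):
    print(query)
```

The competitor list itself still has to come from per-client research; only the template expansion is automated here.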

Step 3: Problem-solution queries

These queries describe the user's problem without naming solution providers: "how to manage remote team projects", "tools for tracking agency client work". AI engines answering these queries will recommend specific tools - your client's job is to be one of those tools.

Step 4: Baseline measurement

Before any optimisation begins, establish baseline citation rates for all three query types across all four AI engines. This baseline is the reference point for measuring improvement and the data that justifies ongoing retainer fees.
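As a rough illustration of what a baseline looks like as data, the sketch below computes a citation rate per query type and engine from a list of observations. The record shape (query type, engine, whether the client was cited) and the sample values are assumptions made for the example, not the export format of any particular tool.

```python
from collections import defaultdict


def baseline_rates(observations):
    """Return citation rate (%) for each (query_type, engine) pair."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for query_type, engine, was_cited in observations:
        key = (query_type, engine)
        totals[key] += 1
        if was_cited:
            cited[key] += 1
    return {key: 100.0 * cited[key] / totals[key] for key in totals}


# Hypothetical sample: (query_type, engine, client_was_cited).
sample = [
    ("category", "ChatGPT", True),
    ("category", "ChatGPT", False),
    ("comparison", "Perplexity", True),
    ("problem-solution", "Gemini", False),
]

for (query_type, engine), rate in sorted(baseline_rates(sample).items()):
    print(f"{query_type:<18}{engine:<12}{rate:5.1f}%")
```

Storing the baseline in this keyed form makes later month-over-month comparisons a simple lookup.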

The Weekly Monitoring Rhythm

AI citation rates require weekly monitoring, not monthly. Citation rates can shift materially within a single month, and catching a drop early allows you to investigate and respond before the next client reporting period.

The weekly monitoring process should take no more than 30-45 minutes across all clients when tooling is right:

Check the dashboard summary: Scan for any client showing significant citation rate drops week-over-week (more than 10 percentage points on core queries)

Investigate anomalies: For any client showing a drop, check whether competitors have published new content, whether the AI engine's behaviour on that query type has changed, or whether client content has been updated or removed

Log changes: Record any optimisation actions taken that week and their category (schema update, content publish, third-party mention acquired) so you can correlate actions with citation rate changes over time

Flag client communications: If any client is approaching a reporting date with a notable trend (positive or negative), flag it now rather than discovering it in the reporting process
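The first step of the weekly check can be sketched as a simple comparison of two weekly snapshots. This is an illustrative example only: the dictionary inputs, client names, and function name are assumptions, and the 10-percentage-point threshold is the one suggested above.

```python
DROP_THRESHOLD_PP = 10.0  # percentage points, per the guideline above


def flag_drops(last_week, this_week, threshold=DROP_THRESHOLD_PP):
    """Return (client, drop) pairs where the rate fell by more than threshold."""
    flagged = []
    for client, previous in last_week.items():
        current = this_week.get(client)
        if current is None:
            continue  # no data this week; handle separately
        drop = previous - current
        if drop > threshold:
            flagged.append((client, drop))
    # Largest drops first, so the worst cases are investigated first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical core-query citation rates (%) per client.
last_week = {"client-a": 40.0, "client-b": 55.0, "client-c": 30.0}
this_week = {"client-a": 25.0, "client-b": 50.0, "client-c": 20.0}

for client, drop in flag_drops(last_week, this_week):
    print(f"{client}: down {drop:.1f} percentage points")
```

Note that a drop of exactly 10 points is not flagged here, since the guideline says "more than 10"; adjust the comparison if your agency prefers an inclusive threshold.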

The Monthly Client Report: What to Include and What to Skip

Client reporting is where agency AI visibility practices often overcomplicate themselves. Monthly reports that run to 20 pages of data confuse clients and bury the metrics that matter. The effective multi-client reporting format is shorter than most agencies default to.

What to include

Overall citation rate: Percentage of tracked queries where the client brand is cited across all four AI engines, month-over-month

By-engine breakdown: Citation rate on ChatGPT, Gemini, Perplexity, and Claude separately - clients often have different performance profiles by engine

Top performing queries: 3-5 queries where the client is being cited consistently, as evidence of traction

Queries needing attention: 3-5 queries where citation rate is low or declining, with one-line explanations of why and what is being done

Actions taken this month: Specific optimisation actions (schema updates, content publishes, etc.) and their connection to citation rate movement

Next month priorities: What will be worked on and why
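The "top performing" and "needing attention" sections can be derived from the same per-query data. The sketch below assumes a hypothetical mapping of query to citation rate and simply ranks it; the function name and sample figures are illustrative.

```python
def split_queries(query_rates, n=3):
    """Return (top, needs_attention) lists of (query, rate) pairs."""
    ranked = sorted(query_rates.items(), key=lambda item: item[1], reverse=True)
    top = ranked[:n]
    # Pick the weakest queries from what remains, so no query appears twice.
    needs_attention = sorted(ranked[n:], key=lambda item: item[1])[:n]
    return top, needs_attention


# Hypothetical citation rates (%) per tracked query.
rates = {
    "best project management software for remote teams": 80.0,
    "top project management tools for agencies": 10.0,
    "project management software comparison 2026": 55.0,
    "how to manage remote team projects": 30.0,
    "tools for tracking agency client work": 95.0,
    "ExampleBrand alternatives": 5.0,
}

top, needs_attention = split_queries(rates, n=2)
print("Top performing:", [query for query, _ in top])
print("Needs attention:", [query for query, _ in needs_attention])
```

The one-line explanations for each weak query still need to be written by the account lead; the ranking only decides which queries make the report.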

What to skip

Raw citation frequency counts without context ("ChatGPT cited you 47 times" - meaningless without a rate and a baseline)

Full keyword ranking tables for all tracked queries - aggregate the signal

Screenshots of individual ChatGPT responses - these are illustrative, not analytical

Lengthy explanations of how AI engines work - put this in the client onboarding document, not every monthly report

Common Mistakes That Break Multi-Client AI Visibility Practices

Mistake 1: Using the same keyword set for every client in a category

It is tempting to create a template keyword set for "SaaS clients" or "professional services clients" and reuse it. This fails because AI citation rates are highly specific to how the market talks about each individual client's specific value proposition. A project management tool focused on engineering teams needs different tracking queries than one focused on marketing agencies - even if both are in the "project management software" category.

Mistake 2: Treating all four AI engines as equivalent

ChatGPT, Gemini, Perplexity, and Claude have different citation patterns, different content preferences, and different user demographics. A B2B software client may find their citation rate on Perplexity (skewed toward professional users) is more commercially valuable than their citation rate on general ChatGPT queries. Report by engine, not just in aggregate.

Mistake 3: No correlation tracking between actions and citation rates

Without logging optimisation actions and their dates, you cannot demonstrate that your work is causing citation rate improvements rather than general market trends. Keep a simple action log per client: date, action type, affected pages, and subsequent citation rate movement. This log is both an operational tool and your proof of value at renewal time.
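One possible shape for that action log is shown below. The field names and the example entry are assumptions for illustration; a spreadsheet with the same columns works just as well.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ActionLogEntry:
    logged_on: date
    action_type: str               # e.g. "schema update", "content publish"
    affected_pages: list
    rate_before: float             # citation rate (%) when the action shipped
    rate_after: Optional[float] = None  # filled in at a later review

    @property
    def movement(self) -> Optional[float]:
        """Change in percentage points, or None if not yet measured."""
        if self.rate_after is None:
            return None
        return self.rate_after - self.rate_before


# Hypothetical entry for illustration.
entry = ActionLogEntry(
    logged_on=date(2026, 1, 12),
    action_type="schema update",
    affected_pages=["/pricing", "/features"],
    rate_before=20.0,
    rate_after=32.5,
)
print(f"{entry.logged_on} {entry.action_type}: {entry.movement:+.1f} pp")
```

Movement recorded after an action is a correlation, not proof of cause, which is why the log is most persuasive when it shows the same pattern repeating across several actions.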

Mistake 4: Measuring AI visibility in isolation from Google performance

AI citation rates and Google rankings influence each other in ways that matter for client strategy. A client with improving AI citation rates but declining Google traffic has a specific problem (AI is building awareness but Google is not converting it). A client with strong Google traffic but low AI citations is vulnerable to funnel drop-off at the awareness stage. Report both metrics together, even if the agency is only responsible for one of them.

Mistake 5: Selling without a baseline measurement

Agencies that sell AI visibility retainers before establishing baseline citation rates have nothing to compare improvement against. Run the baseline measurement before the engagement starts - before the contract is signed if possible. Clients who see a low or zero-citation baseline in their own data tend to be more motivated to invest in improvement than clients who receive abstract promises about AI search.

Pricing and Scope Structure for Multi-Client AI Visibility

The agency economics of AI visibility management become more attractive as client count grows, because the tooling and workflow investment amortises across the roster. A rough structure that works at different client scales:

1-5 clients: Include AI visibility monitoring within existing SEO retainers. The tooling cost per client is low at this scale, and AI visibility adds perceived value without requiring a standalone retainer.

6-15 clients: Break AI visibility into a distinct service line with separate reporting and pricing. At this scale, the workflow investment justifies explicit scope and dedicated pricing.

16+ clients: Systematise reporting with templatised output (fed by tool data), consider hiring or designating an AI visibility specialist on the team, and build a proprietary methodology deck for new client pitches.
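For agencies that want to encode these bands in a planning sheet or internal tool, the mapping is a short lookup. The sketch below is illustrative: the function name and tier labels are made up, and it assumes a roster of exactly 15 clients belongs to the middle band.

```python
def service_tier(client_count: int) -> str:
    """Map roster size to the rough scope structure described above."""
    if client_count < 1:
        raise ValueError("client_count must be at least 1")
    if client_count <= 5:
        return "bundled into existing SEO retainers"
    if client_count <= 15:  # assumption: 15 sits in the middle band
        return "distinct service line with separate pricing"
    return "systematised practice with a dedicated specialist"


for count in (3, 10, 15, 30):
    print(count, "->", service_tier(count))
```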

Building the Internal Team Capability

AI visibility management requires a different skill set than traditional SEO. The team members delivering this service need to understand how AI engines retrieve and cite content, how schema markup affects citation likelihood, how content structure differs for AI queries versus Google queries, and how to diagnose why a client is or is not being cited.

For agencies building this capability, the practical steps:

Designate one team member as the AI visibility lead - someone who will go deep on AEO methodology and become the internal expert other team members consult

Build a client-facing education resource (a one-pager or short deck) that explains AI citations and why they matter, so you are not re-explaining the concept from scratch in every client call

Establish an internal case study as soon as you have a client showing measurable citation rate improvement - this becomes your primary sales tool for the service

Run monthly internal reviews of citation rate data across all clients to identify patterns (what optimisations are working, what is not) that can be systematised into your agency methodology

The Operational Summary

Managing AI visibility for multiple clients is operationally tractable when the foundation is right: one platform for all citation tracking, a rigorous per-client keyword mapping process at onboarding, a weekly monitoring rhythm that stays within 30-45 minutes across the whole roster, and monthly reporting that leads with the metrics clients care about rather than data dumps.

The agencies that will build strong AI visibility practices are the ones systematising now - before the market matures and the operational advantage of being an early mover disappears. The tooling, the workflows, and the client education materials are investments that pay off more with each additional client added to the roster.

Start with the right platform. AI Rank Lab's agency tier gives you the multi-client citation monitoring infrastructure that makes the rest of this framework possible. See how the multi-client dashboard works.



Written by

Devanshu

AI Search Optimization Expert